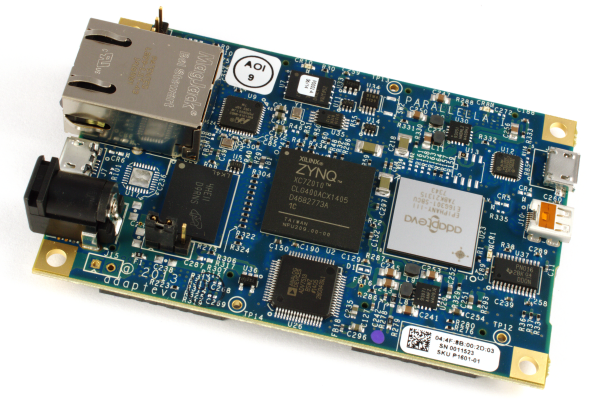

We at Erlang Solutions have had the pleasure of coming into contact with shiny Parallella board prototype and over the past few weeks we have been exploring how to utilise it as part of our experiments in the multicore domain.

In this blog post Edward Tate, our resident OpenCL hacker, introduces the reasons we have been interested in making use of the Parallela: Data Parallelism and OpenCL.

What is data parallelism?

Data parallelism is where multiple distinct units of data are operated upon simultaneously by the same task. On the CPU this would mean distributing the data across cores and executing an operation such as multiplication upon distinct units of memory at the same time. Given that CPUs nowadays contain 8 or more cores and data parallel problems may need to operate upon data which extends much past this boundary (an image for example may contain 1024*1024 pixels), it is necessary to devise mechanisms which can partition the data appropriately so that the cores can be efficiently utilised.

If we were to use Erlang processes to partition the data, we would be using Erlang’s runtime directly, and thus the ‘overhead’ of our problem relative to straight C code would be the garbage collector and the scheduler; the GC due to the space necessary to allocate processes which contain distributed data within a task, and the scheduler due to it being the mapping of processes to multiple cores.

We could envisage writing a piece of C or C++ code which efficiently caters for these types of problems without any scheduling or GC overhead. In fact this is what was developed as part of the ParaPhrase project (FastFlow)[1].

However, devices which already perform partitioning in hardware (such as a GPU) are well suited to solving these types of problems since a scheduling mechanism in software is no longer necessary. This is by and large what the Erlang world has resorted to when dealing with image processing and audio processing tasks in the past.

The Epiphany architecture does not have this type of partitioning of data across threads in hardware which raises the question of scheduling efficiently across cores in software; this is an area of research that we are interested in investigating since Erlang’s scheduler was not specifically designed with parallelism in mind from the get go.

Why Erlang?

Parallel data structures and algorithms are becoming much more important with the advent of multicore chips. Being aware of algorithms which have been parallelized and methods for operating upon data in parallel have become central to understanding how to effectively compute problems within these constraints.

Erlang is a functional programming language which was designed with concurrency oriented programming in mind, meaning problems which require the explicit programming of processes and their communication, or the utilisation of patterns (or behaviours) which provide generic means of representing the problem.

We wanted to extend Erlang’s applicability to the domains of video processing, audio processing and image processing, which inherently contain problems with massive amounts of data parallelism, so naturally we looked to GPUs and other hardware devices such as the Epiphany devices.

Intensional Parallelism

In addition to wanting to attain efficiency where data parallel algorithms are concerned, we have been investigating different ways of expressing parallel programs such that they are comprehensible and to provide a methodology which allows for the debugging of such programs without too many headaches.

One example of an alternate approach is the GLU Lucid language developed at SRI’s Computer Science Laboratory which allows for expressing parallel algorithms in a simple form, where Lucid acts as a coordination language and underlying sequential C code is responsible for operating upon the data in parallel.

If you wish to find out more about parallelism in Lucid please see [2], [3] and [4].

The Parallella has been of great interest to us from its inception, since we have been developing such a coordination DSL in-house for quite some time that we envisage mapping to the Epiphany architecture in the future.

OpenCL

Another idea which we explored was to make use of OpenCL within Erlang. We made use of the great OpenCL binding available at [5] for quite some time as part of the ParaPhrase project. There we developed an application framework which allows for the execution of arbitrary OpenCL kernels within a node. As an extension to this project we wanted to see how the Parallella would fare against a GPU in terms of performance and more importantly power consumption since power consumption in data centres is becoming a major issue.

More information about OpenCL on Parallella can be found at this blog post.

On the Parallella so far, we have managed to create our own OpenCL binding (with some help from David Richie from Brown Deer Technology) in the form of an Erlang NIF module. A NIF is a function that is implemented in C instead of Erlang which behaves more or less like an Erlang function. More information about NIFs can be found at [6]. We are using this in an upcoming demo for the Erlang User Conference in Stockholm next month.

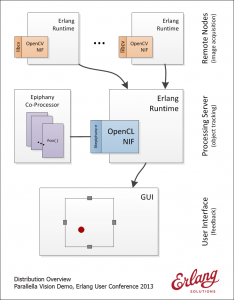

The application area we have chosen is vision systems (object tracking) and here’s a sneak peek at the overall architecture of our system:

(click thumbnail for a much larger version)

Future plans

In the next blog post, we will talk about the implementation details and the challenges we have tackled during the development of this vision demo. We might even have a short video of it in operation!

Stay tuned for more details and feel free to get in touch if you have any queries or comments.

References

[1] http://sourceforge.net/projects/mc-fastflow/[2] http://soft.vub.ac.be/soft/edu/mscprojects/2010_2011/lucid-on-tilera

[3] http://www.csl.sri.com/papers/sri-csl-94-06/sri-csl-94-06.pdf

[4] http://books.google.co.uk/books/about/Multidimensional_Programming.html?id=4f3WZaLgL9gC

[5] http://www.erlang.org/doc/man/erl_nif.html

[6] http://github.com/tonyrog/cl

Really impressive! Truly heterogeneous programming for a truly heterogeneous platform.:-) Really looking forward to seeing the demo. Will this be the first example of an Erlang/OpenCL combo on an embedded platform?

This is a fascinating post. Thank you for the details and the highly educational links.

… follow-up question: I’m particularly interested in the partitioning problem.

When you speak of HW partitions (e.g. on a GPU), is it correct to say that the algorithms leveraged to affect the partitioning are highly-optimized for specific workloads or are higher-order analysis techniques employed to achieve optimal partitioning for arbitrary workloads? I suspect the former but haven’t researched this topic extensively and am curious.

Thanks, Chris

Loopy syntaxes aside, I am not a fan of Erlang or any other declarative programming language. They seem more like programming languages by mathematicians for mathematicians. While the math function-esq style makes things more abstract (not sure it’s in a good way), this makes the reliance on compilers far greater. One thing i’ve learned while programming for embedded systems is that compilers are dumb. A simple example is in C++, using the “register” keyword (deprecated in C++11?!) in the right places (most everywhere) can make the generated machine code smaller and faster but without it, even at the max optimization levels, the machine code is longer and slower because compilers are dumb. That’s just one small example. Now, push control flow onto the stack of tasks (get it? push, stack ^_^) and the generated machine code is not going to be pretty much less small. This is a problem when you have a small amount of memory to load a program into. Maybe when we invest in writing smart[er] compilers then declarative programming may make more sense. For now, they are just languages for mathematicians and academics.

OpenCL seems like a good choice if one is insistent on a single control flow. Though, there is value in crafting code for each core. I’m a fan of XC because it let’s you do just that with ease.

While I agree that compilers for small architectures do sometimes generate suboptimal code, this is not really relevant in this case as we are not compiling Erlang down to Epiphany machine code. We are running Erlang on top of Linux that runs on the ARM cores to orchestrate the concurrent data flow and use OpenCL kernels to do the actual computation. We will also be experimenting with ditching OpenCL and doing things with bare Epiphany cores in the future.

Regarding the syntax, well, it is different but it’s also very concise so there really isn’t much to it. The main issue is not the syntax itself but the functional programming paradigm Erlang uses. If you are familiar with functional programming, figuring out the Erlang syntax is a minor exercise. If you come from an imperative programming world, there are a few things you need to wrap your head around like referential transparency, avoiding mutable data, liberal use of recursion etc. to fully understand how Erlang works. If you’re interested, LYSE is a great resource to get started: http://learnyousomeerlang.com/

As a hardware engineer (far from a theoretical mathematician!) I use Erlang to design embedded systems that are distributed, concurrent and fault tolerant — sort of analogous to a “top level” description in hardware description languages, with the added benefit of supervisors to deal with failures and the ability to talk to other nodes and distribute things around. This is just one use case that is relevant outside academia, there are a number of other areas that Erlang is actively used today as well.

Thanks Andreas, I believe this is the first time Erlang and OpenCL are running together on an embedded platform, which is quite exciting 🙂

Glad you found it useful, Chris.

(I’ll let Ed take the next question as it is his research area)

a) I was talking about all compilers, not just for embedded systems, that’s just where I is most visible. The same problems still exist in compilers for x86 and x86_64!

b) You misunderstand, i’m not trying to say that there is something “wrong” with the paradigm, only that it’s translation to machine code is currently quite crude and problematic to compilers as less complex translations (from simple procedural programming languages like C) to machine code are implemented with limited efficiency.

c) I did see that Erlang wasn’t actually used on the chip, I was simply making a case for not doing so in the future because it seemed like you were leaning toward doing just that.

d) eeeee!

e) you rang?

be sure to post a video of the demo. 🙂

Hi Chris,

Thank you for your interest. The algorithms we are using are written in a variant of TransLucid which deals with arrays of unbounded extent on the CPU, and OpenCL kernels are used where it makes sense. The TransLucid algorithms adapt to varying workloads whilst using the CPU. Where the GPU is concerned, the dimensionality of the inputs and outputs need to be fixed to the NDRange call that is made which executes an OpenCL kernel. Allowing for the dimensionality of variables to be constrained by a specific range has been investigated in a new paper submitted by John Plaice for the spatial computing 2013 workshop. To answer your question more specifically, in order to achieve optimal partitioning for arbitrary workloads by using the GPU, the extent of the input / output arrays must be known during evaluation, which we currently do not support but are looking to integrate in the near future. The situation is more interesting when it comes to the Parallela since it allows for arbitrary code to be run per core, which means that each core could run an instance of the evaluator, thus giving us the ability to perform idealised partitioning. It is the perfect platform for dataflow oriented programming languages.

I hope this has provided some clarity.

Compiler technology is pretty sophisticated these days and I don’t see a point in writing *everything* in C (and I happen to like C very much) just because it translates well into machine code and any other higher level language will result in the loss of a few CPU cycles. Taking that one step further would be to advise switching over to Assembly as it’s “bare metal”, which is not feasible and/or sane.

There are certain applications where it makes perfect sense to squeeze out every bit of performance out of your system and you should definitely use C there, not Erlang (or Java, Python, etc.) If you look at our system diagram, you will see that we are doing just that: Using Erlang for coordination/distribution and dealing with concurrency, areas where it is excels in, and C bindings for OpenCL (number crunching) and OpenCV (webcam interface).

It simply boils down to this: Use the right tool for the job.

Are there any plans to port Erlang to Epiphany (not ARM) ?

[5] and [6] are swapped, should be cl then erl_nif per the text.

Thanks very much for the details replay, Edward. I understand about half of your explanation on first read; the balance will require more thought and some offline investigation to parse.

>It is the perfect platform for dataflow oriented programming languages.

Indeed. My interest here derives from work on a new data-flow modeling language (declarative description language – not a programming language per se; a subtle but important distinction).

Erlang is fascinating. I was first introduced about a year ago by friends at http://soundrop.com (Norway) who leverage an Erlang-based back end to affect global synchronization of audio streams atop Spotify. Parenthetically if you like music, Soundrop is cooler than cool. Likely you have met these guys or know of their work already – I don’t get the impression that the Erlang community is yet huge. Based on what I’ve seen and learned however, that’s likely to change.

Ed,

great brain -boom

franz

Nice work! Looking forward to seeing additional detail.