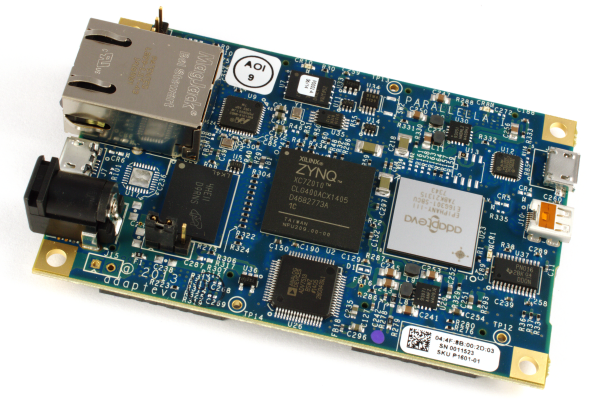

The Parallella Board

• 18-core credit card sized computer

• #1 in energy efficiency @ 5W

• 16-core Epiphany RISC SOC

• Zynq SOC (FPGA + ARM A9)

• Gigabit Ethernet

• 1GB SDRAM

• Micro-SD storage

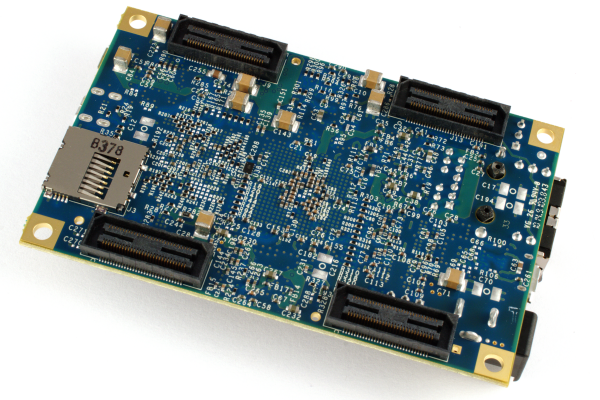

• Up to 48 GPIO pins

• HDMI, USB (optional)

• Open source design files

• Runs Linux

• >10,000 boards shipped

• Starting at $99